Charlotte Brunquet: Harnessing AI for Good

Hi Charlotte, what do you do and what brought you to Singapore?

Living abroad for a couple of years had always been in the back of my mind, so when I found a job in Singapore 3 years ago, my husband and I didn’t have to think too long before we decided to embark on this adventure!

After almost 6 years in supply chain roles with P&G France, I joined Bolloré Logistics to create the Digital Innovation department in Singapore. It was an exciting and challenging experience, as the freight and logistics industry is far behind other sectors in its digitalisation journey.

That meant many opportunities for improvement, but also a lot of time and energy spent changing mindsets. I was lucky to have strong management support, which allowed me to launch global programs such as RPA (Robotic Process Automation) to automate manual and repetitive tasks. We first ran a pilot in Singapore, and then deployed the technology in Australia, Japan, Malaysia, China, France and the US.

That’s when I realised that I liked coding and using my technical skills to build business solutions. So I took online courses in data science and machine learning and learnt to code in Python.

Like many of my fellow millennials, I was also looking for a sense of purpose in my job. So when I met the CEO of Quilt.AI, a Singaporean start-up working for both commercial companies and NGOs, I decided to leave the supply chain world and join the start-up as a data scientist.

Today, companies have access to a lot of data. How do you help them make better use of it?

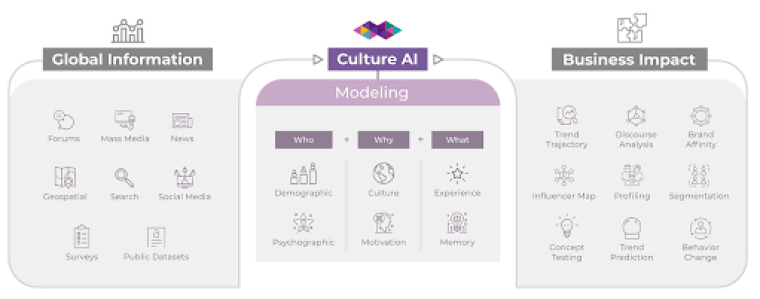

As a tech person, I work in collaboration with anthropologists and sociologists to understand human cultures and behaviours.

Our main objective is to help companies answer strategic questions such as “who are my customers and what are they longing for”, “how am I doing vs my competitors” and “what should be my growth strategy for the coming years”.

Today, they mostly rely on quantitative surveys and focus groups to get those insights. There are also social media listening tools, but they usually don’t provide deep analysis about consumers.

Working hand in hand with researchers, our team of engineers created machine learning models that recognize emotions from pictures, identify user profiles based on their bio or capture highlight moments in videos. When running those algorithms on large datasets pulled from all social media platforms, we can build a comprehensive and empathetic understanding of people.

After 6 months in the team, I’m still impressed to see how combining the opposite worlds of qualitative and quantitative analysis enables us to create deep insights at a very large scale.

But what excites me the most is applying these technologies to the non-profit sector. NGOs want to better understand the needs of the communities they are helping, but also how to support them more efficiently and drive behaviour change.

- We have helped foundations understand the anti-vaccine movement in India by analysing the Twitter profiles of users spreading such messages and of their followers, as well as Google searches and YouTube videos.

- We built a misogyny model to identify and understand macho behaviours on social media and promote advertising content accordingly.

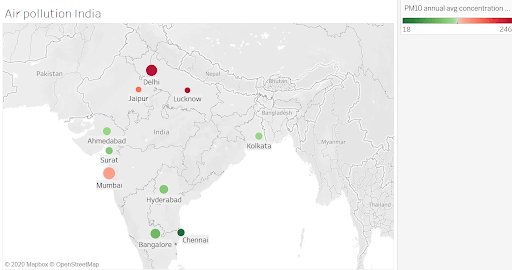

- We worked with UNICEF, CIFF (Children’s Investment Fund Foundation), and Clean Air Fund to tackle online child pornography, understand malnutrition trends and create air pollution prevention campaigns.

How can AI help us tackle social and environmental challenges?

There are many different ways!

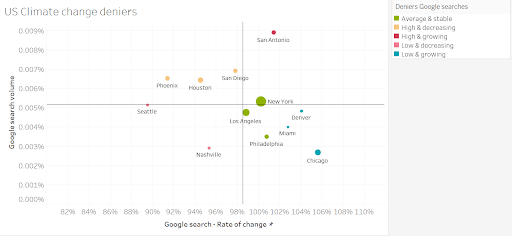

To give you an example, I’m currently working on a climate change product to help institutions and foundations implement behaviour change strategies at a city level.

- First, we assess the vulnerability of each city to different climate issues (air pollution, flooding, temperature anomalies, etc.).

- Then, we use machine learning to analyze the online discourse on climate change topics, via Google searches, tweets, news, blogs.

- Once we understand the level of awareness and type of conversation around climate change in each city, we look at the impact of past campaigns to identify successful visuals, emotions and narrative styles.

- Based on this, we can recommend micro-targeted messages and campaigns that will resonate well in the local communities and drive behaviour changes efficiently.

There are many other ways of using AI for good, and we see an increasing number of examples around us every day. Companies like Winnow and Lumitics use computer vision to identify and reduce food waste in professional kitchens.

BeeBryte (interview Elodie Hecq) leverages machine learning to reduce energy consumption in buildings by predicting outside temperature and activity inside the building.

Bayes Impact uses AI to help unemployed people find a job by matching their skillset with the job market. All of this is very exciting!

That sounds terrific! However, we also hear a lot about the negative impacts of AI, such as job losses, discrimination or manipulation.

How do you ensure that your technology is used “for good”?

Technology itself is neutral; it’s never good or bad. It all depends on how it’s being used. Nuclear fission enabled carbon-free energy but also gave rise to a weapon of mass destruction. The internet gave access to information to almost everyone around the world but also led to the creation of the dark web. The same goes for AI. The risk of negative applications doesn’t mean that we have to stop using a new technology and deprive ourselves of its benefits.

At Quilt.AI, we follow a couple of ground rules to ensure we always prioritise ethics and humanity.

- We never do “behaviour change” projects for commercial companies, only for non-profit organisations.

- We don’t share any individual data and we use publicly available data only.

- Finally, we don’t do any studies on people under the age of 18.

Of course, other organizations around the world could be doing the same as us without these priorities in mind.

That’s why it’s urgent for governments and institutions to establish rules that regulate AI on a global scale. In April 2019, the EU released a set of ethical guidelines promoting the development, deployment and use of trustworthy AI technology. The OECD has published principles which emphasise the development of AI that respects human rights and democratic values, ensuring those affected by an AI system “understand the outcome and can challenge it if they disagree”. However, none of those documents provide regulatory mandates; they are just guidelines to shape the conversation around the use of AI.

The challenge is that AI ethics remains a fundamentally international problem, since the spread of AI does not stop at national borders. Some countries may be reluctant to adopt rules that could hamper their AI industry’s growth if geopolitical rivals do not follow suit, particularly when national security or business advantage could be at risk. China, for example, has not endorsed any of those principles; its government has described AI as a “new focal point of international competition” and declared its intention to be the world’s “premier AI innovation center” by 2030.

What are the limitations of AI, and what role do humans still play in this increasingly automated world?

The first thing we should not forget is that AI is trained by humans. Algorithms learn from decisions and information provided by human beings, and they are usually clueless when faced with new situations. Even unsupervised learning does nothing more than create groups of similar data; you still need a human to name them. A facial recognition tool can recognize fear or joy on our faces because we fed it with data that we have labeled with our human understanding of emotions.
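To make the point about unsupervised learning concrete, here is a minimal sketch of a toy one-dimensional k-means clustering in plain Python. It is an illustration only, not Quilt.AI's code: the data (daily minutes spent on an app), the starting centroids and the label names are all hypothetical.

```python
# Toy 1-D k-means. All values and label names are hypothetical.

def kmeans_1d(values, initial_centroids, iterations=10):
    """Group values around centroids; returns (assignments, centroids)."""
    centroids = list(initial_centroids)  # copy so the caller's list is untouched
    assignments = []
    for _ in range(iterations):
        # Assignment step: index of the nearest centroid for each value.
        assignments = [
            min(range(len(centroids)), key=lambda c: abs(v - centroids[c]))
            for v in values
        ]
        # Update step: move each centroid to the mean of its members.
        for c in range(len(centroids)):
            members = [v for v, a in zip(values, assignments) if a == c]
            if members:
                centroids[c] = sum(members) / len(members)
    return assignments, centroids


# Hypothetical data: daily minutes spent on an app by six users.
minutes = [5, 7, 6, 58, 62, 60]
assignments, centroids = kmeans_1d(minutes, [0.0, 100.0])

# The algorithm only outputs group numbers (0 and 1).
# Giving those groups a meaning is a human decision.
human_labels = {0: "casual users", 1: "heavy users"}
named = [human_labels[a] for a in assignments]
```

The algorithm finds two groups, but nothing in its output says what they mean. The `human_labels` mapping is where human interpretation comes in.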

We are still far away from general artificial intelligence, when machines can replicate the full range of human abilities, and there is no consensus on if and when this will be achieved. What AI lacks to reach this stage is the ability to apply the knowledge gained from one domain to another, as well as common sense. A study by Cornell University gives us an example: the researchers tricked a neural network algorithm trained to recognize objects in images by introducing an elephant into a living room scene. Previously, the algorithm was able to recognize all objects (chair, couch, television, person, book, handbag, etc.) with high performance. However, as soon as the elephant was introduced, it started to get really confused and misidentified objects that were correctly detected before, even if they were located far away from the elephant in the image. In the field of market research more specifically, AI still struggles to understand context, sarcasm or irony.
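The difficulty with sarcasm can be sketched with a deliberately naive word-counting sentiment scorer. This is a hypothetical illustration, not a description of any production tool: the mini-lexicon and both example sentences are invented, and real models are far more sophisticated, though they can fail in a similar way.

```python
# Naive lexicon-based sentiment: count positive words minus negative words.
# The lexicon and sentences are hypothetical, for illustration only.
POSITIVE = {"great", "love", "perfect"}
NEGATIVE = {"delayed", "broken", "terrible"}


def lexicon_score(text):
    """Return (#positive words - #negative words) in the text."""
    words = [w.strip(".,!?").lower() for w in text.split()]
    positives = sum(w in POSITIVE for w in words)
    negatives = sum(w in NEGATIVE for w in words)
    return positives - negatives


sincere = "I love this airline, the service was perfect."
sarcastic = "Great, my flight is delayed again. I just love waiting. Perfect."

sincere_score = lexicon_score(sincere)
sarcastic_score = lexicon_score(sarcastic)
```

Both sentences get the same positive score, although a human reader immediately sees that the second one is a complaint.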

Algorithms help us a lot by identifying trends, prevailing emotions and topics, but we still need human interpretation to take in the full complexity of understanding another human being.

How “dare you” to be a woman in AI?

Ever since I started my engineering studies, I’ve been used to being surrounded by a majority of men, and I have to say I’ve never seen this as an obstacle or a challenge. I just happen to love maths and science, so I never asked myself any question other than how to do what I like. Two of my sisters are also engineers, so I guess it doesn’t feel that uncommon to me!

In the professional world, I believe it depends more on the company culture and the people you are working with than the industry itself. I’ve worked in the supply chain and logistics industry before moving into tech and I have to say I felt much less comfortable as a woman in some of my previous positions.

Having said that, I understand it might be intimidating for women to join the tech world, which is still mostly male-dominated. Besides, women are usually less confident in their skillset than men, which seems an even bigger challenge in the tech industry, as it is very broad and fast-changing. There is always something new to learn, so it can feel like you’re constantly falling behind, and imposter syndrome is quite common.

How do you bring more diversity?

That’s a very interesting question!

I think we are all responsible for diversity and inclusion at our own level. As a manager, I intentionally tried to hire different types of profiles, not only gender-wise but also in terms of culture, academic background and personality. It is not that easy, and it involves being aware of your own biases. It also requires a bit more effort for the team to work together in the beginning, but it ends up adding a lot of value when people feel comfortable debating and sharing their opinions.

In my current team, our objective is to build “empathy at scale”, by creating algorithms that understand all facets of human personalities and culture without bias. A prerequisite for success is to be inclusive from the start and that’s where our team of anthropologists comes into play. We recently built an application that identifies people’s cultural style (hipster, corporate, punk, hippie, etc) instead of the usual gender and ethnicity recognition tools, and people love it!

Charlotte, thank you!

LinkedIn: Charlotte Brunquet

Get in touch with us @ womenfrenchtech at gmail dot com

In collaboration with Amel Rigneau & Yanina Blaclard